Interactive Linear Algebra

https://textbooks.math.gatech.edu/ila/

Vector, Matrix, and Tensor Derivatives | Erik Learned-Miller

https://compsci697l.github.io/docs/vecDerivs.pdf

The Matrix Cookbook

https://www.math.uwaterloo.ca/~hwolkowi/matrixcookbook.pdf

A Matrix Formulation of the Multiple Regression Model:

In the multiple regression setting, because of the potentially large number of predictors, it is more efficient to use matrices to define the regression model and the subsequent analyses.

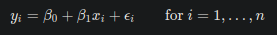

Consider the following simple linear regression function:

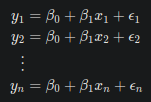

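If we actually let i = 1, …, n, and write the function in the usual notation y_i = β_0 + β_1 x_i + ε_i, we see that we obtain n equations:

$$
\begin{aligned}
y_1 &= \beta_0 + \beta_1 x_1 + \varepsilon_1 \\
y_2 &= \beta_0 + \beta_1 x_2 + \varepsilon_2 \\
&\;\;\vdots \\
y_n &= \beta_0 + \beta_1 x_n + \varepsilon_n
\end{aligned}
$$

Well, that’s a pretty inefficient way of writing it all out! As you can see, there is a pattern that emerges. By taking advantage of this pattern, we can instead formulate the above simple linear regression function in matrix notation:

$$
\begin{bmatrix} y_1 \\ y_2 \\ \vdots \\ y_n \end{bmatrix}
=
\begin{bmatrix} 1 & x_1 \\ 1 & x_2 \\ \vdots & \vdots \\ 1 & x_n \end{bmatrix}
\begin{bmatrix} \beta_0 \\ \beta_1 \end{bmatrix}
+
\begin{bmatrix} \varepsilon_1 \\ \varepsilon_2 \\ \vdots \\ \varepsilon_n \end{bmatrix}
$$

That is, instead of writing out the n equations, using matrix notation, our simple linear regression function reduces to a short and simple statement:

$$
Y = X\beta + \varepsilon
$$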

Where:

- X is an n × 2 matrix.

- Y is an n × 1 column vector, β is a 2 × 1 column vector, and ε is an n × 1 column vector.

- The matrix X and vector β are multiplied together using the techniques of matrix multiplication.

- And, the vector Xβ is added to the vector ε using the techniques of matrix addition.
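The bullet points above can be sketched in a few lines of plain Python, using lists of rows as matrices so that the mechanics stay visible. The data values and the helper names `matmul` and `matadd` are made up for illustration; they are not from the source.

```python
# Hypothetical toy data: n = 4 observations of a single predictor x.
x = [1.0, 2.0, 3.0, 4.0]
beta = [[0.5], [2.0]]                      # 2 x 1 column vector (beta_0, beta_1)
eps = [[0.1], [-0.2], [0.0], [0.3]]        # n x 1 column vector of errors

# Design matrix X (n x 2): a column of ones for the intercept, then x.
X = [[1.0, xi] for xi in x]

def matmul(A, B):
    """Multiply A (m x k) by B (k x p), both stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def matadd(A, B):
    """Add two matrices of the same shape element by element."""
    return [[a + b for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(A, B)]

# Matrix form: Y = X beta + eps, an n x 1 column vector.
Y = matadd(matmul(X, beta), eps)

# The same values written out as n separate scalar equations.
Y_scalar = [[beta[0][0] + beta[1][0] * xi + ei[0]] for xi, ei in zip(x, eps)]
```

Both `Y` and `Y_scalar` hold the same n values; the matrix form simply states all n equations at once.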

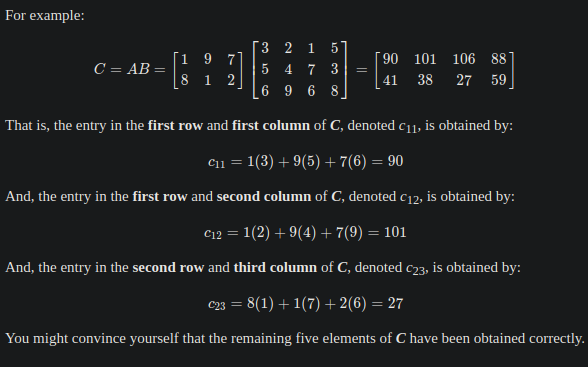

In matrix multiplication, there are some restrictions. You can’t just multiply any two matrices together. Two matrices can be multiplied together only **if** the number of columns of the first matrix equals the number of rows of the second matrix. Then, when you multiply the two matrices:

- The number of rows of the resulting matrix equals the number of rows of the first matrix

- The number of columns of the resulting matrix equals the number of columns of the second matrix.
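As a minimal sketch of this rule, the hypothetical `matmul` helper below checks the shapes before multiplying and raises an error when they are incompatible. Matrices are plain lists of rows, and the example values are arbitrary.

```python
def shape(M):
    """Return (rows, columns) of a matrix stored as a list of rows."""
    return len(M), len(M[0])

def matmul(A, B):
    """Multiply A by B, enforcing the compatibility rule first."""
    (m, k), (k2, p) = shape(A), shape(B)
    if k != k2:
        raise ValueError(f"cannot multiply a {m}x{k} matrix by a {k2}x{p} matrix")
    # Result has m rows (from A) and p columns (from B).
    return [[sum(A[i][t] * B[t][j] for t in range(k)) for j in range(p)]
            for i in range(m)]

A = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3
B = [[1, 0],
     [0, 1],
     [2, 2]]             # 3 x 2

C = matmul(A, B)         # (2 x 3)(3 x 2) gives a 2 x 2 matrix

# A times A is (2 x 3)(2 x 3): 3 columns vs. 2 rows, so it must fail.
try:
    matmul(A, A)
    incompatible_allowed = True
except ValueError:
    incompatible_allowed = False
```

Libraries such as NumPy apply the same shape check when you use the `@` operator on two-dimensional arrays.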

Transposes help with the mechanics of matrix multiplication when two matrices have incompatible shapes. In a linear model with 3 inputs and 3 weights, you might represent each as a 3 × 1 column vector. You can’t multiply a 3 × 1 vector by another 3 × 1 vector. You can, however, multiply a 1 × 3 vector (the transpose of one of them) by a 3 × 1 vector, which gives a 1 × 1 matrix (a scalar).
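The 3-input, 3-weight case can be sketched as follows. The input and weight values are invented for illustration, and `transpose` and `matmul` are small hypothetical helpers over lists of rows.

```python
def transpose(M):
    """Swap rows and columns of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*M)]

def matmul(A, B):
    """Multiply A (m x k) by B (k x p); raises if the shapes do not match."""
    if len(A[0]) != len(B):
        raise ValueError("columns of A must equal rows of B")
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

inputs = [[2.0], [3.0], [4.0]]     # 3 x 1 column vector
weights = [[0.5], [1.0], [2.0]]    # 3 x 1 column vector

weights_T = transpose(weights)     # 1 x 3 row vector
output = matmul(weights_T, inputs) # (1 x 3)(3 x 1) gives a 1 x 1 matrix

# Multiplying the two 3 x 1 vectors directly is rejected.
try:
    matmul(weights, inputs)
    direct_product_allowed = True
except ValueError:
    direct_product_allowed = False
```

Here `output` is `[[12.0]]`, that is 0.5·2 + 1·3 + 2·4 wrapped in a 1 × 1 matrix.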

Recall that for two column vectors u and v, **uᵀv** is equal to the dot product between **u** and **v**. When talking about matrices, the “dot product between matrices” can be understood as matrix multiplication: each entry of the product is the dot product of a row of the first matrix with a column of the second.
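To make the link between dot products and matrix multiplication concrete, here is a small sketch that builds a matrix product entry by entry from dot products. The helper names `dot` and `matmul_via_dots` and the numbers are illustrative assumptions.

```python
def dot(u, v):
    """Dot product of two equal-length vectors given as flat lists."""
    return sum(a * b for a, b in zip(u, v))

def matmul_via_dots(A, B):
    """Entry (i, j) of AB is the dot product of row i of A and column j of B."""
    columns_of_B = [list(col) for col in zip(*B)]
    return [[dot(row, col) for col in columns_of_B] for row in A]

A = [[1, 2],
     [3, 4]]             # 2 x 2
B = [[5, 6],
     [7, 8]]             # 2 x 2

C = matmul_via_dots(A, B)

# Top-left entry: row 0 of A dotted with column 0 of B.
top_left = dot([1, 2], [5, 7])
```

With these values `C` is `[[19, 22], [43, 50]]`, and `top_left` matches `C[0][0]`.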

https://www.youtube.com/playlist?list=PL221E2BBF13BECF6C