NORMAL INITIALIZATION

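This method is simple: the weights are drawn at random from a standard normal distribution and then multiplied by a small value (typically 0.01 or 0.001). This keeps the initial weights close to zero, so the inputs to the sigmoid start in the region around zero, where the function is not saturated and its output (which ranges from 0 to 1) still responds to changes in the input.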
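As a rough illustration of why the scale factor matters, the sketch below compares how many sigmoid outputs end up saturated with and without the 0.01 factor. The layer sizes, the random input batch, the 0.05 saturation threshold, and the helper name `saturated_fraction` are all assumptions made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
input_units, output_units = 512, 64          # hypothetical layer sizes
x = rng.standard_normal((1000, input_units)) # hypothetical input batch

def saturated_fraction(scale):
    """Fraction of sigmoid outputs within 0.05 of 0 or 1 for a given weight scale."""
    w = rng.standard_normal((input_units, output_units)) * scale
    a = sigmoid(x @ w)
    return float(np.mean((a < 0.05) | (a > 0.95)))

frac_unscaled = saturated_fraction(1.0)   # plain randn weights
frac_scaled = saturated_fraction(0.01)    # randn weights * 0.01

print("saturated without scaling:", frac_unscaled)
print("saturated with 0.01 scaling:", frac_scaled)
```

With these assumed sizes, most outputs should be saturated without the scaling and essentially none with it.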

self.weights = np.random.randn(input_units, output_units) * 0.01

XAVIER’S INITIALIZATION

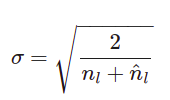

On Xavier’s initialization algorithm the random generated weights are multiplied by the standard deviation given by:

Where nL is the number of inputs on the dense layer and n^L is the number of outputs of the dense layer.

self.weights = np.random.randn(input_units, output_units) * np.sqrt(2 / (input_units + output_units))

Another pure numpy implementation:

var_W = 2 / (input_units+output_units)

self.weights = np.random.normal(scale=np.sqrt(var_W), size=(input_units, output_units))

The “scale=np.sqrt(var_W)” is needed because “np.random.normal” takes the standard deviation, while var_W is the variance. The variance is the standard deviation squared, so the square root converts one into the other.
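A quick way to check that both implementations draw weights with the same spread is to sample each one and compare the empirical standard deviation with the target value. This is only a sketch: the layer sizes and the names `target_std`, `w_a` and `w_b` are made up for the example.

```python
import numpy as np

np.random.seed(0)
input_units, output_units = 300, 200  # hypothetical layer sizes

# Target standard deviation from the formula sqrt(2 / (inputs + outputs))
target_std = np.sqrt(2 / (input_units + output_units))

# First implementation: scale standard-normal samples
w_a = np.random.randn(input_units, output_units) * target_std

# Second implementation: pass the standard deviation to np.random.normal
var_W = 2 / (input_units + output_units)
w_b = np.random.normal(scale=np.sqrt(var_W), size=(input_units, output_units))

print("target std:", target_std)
print("randn * std:", w_a.std())
print("normal(scale=std):", w_b.std())
```

Both empirical values should land close to the target, differing only by sampling noise.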

https://stats.stackexchange.com/questions/309231/xavier-initialization-formula-clarification